AI Code Assistants Are Your Friend, Not Your Replacement — App Development

AI has the potential to make developers more efficient at nearly every task, from code generation, review, optimization, and debugging to documentation writing. Studios can start using AI to review and manage code immediately, reducing tedious work and identifying issues developers may miss.

However, AI can generate vulnerable or unworkable code, and copyright rules around AI-generated code are still unclear. Due to these concerns, AI-generated code should always be reviewed by a human developer, and studios may want to proceed with caution when using AI-generated code in their final products.

Code Generation

AI code generators can help developers come up with solutions when they are stuck or quickly create foundational code. Like ChatGPT, these tools are controlled with natural language prompts, so they are especially useful for developers working in unfamiliar languages. Most AI tools can also translate code from one programming language to another, which greatly speeds up reusing code across projects on different platforms. And many AI tools can write effective test cases to validate existing code, freeing up developers to spend more time on productive code.
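To make the test-writing use case concrete, here is a hand-written sketch (not output from any of the tools discussed) of the kind of test cases a developer might ask an assistant to produce for a small existing function. The function `apply_damage` and its rules are hypothetical and exist only for this example.

```python
def apply_damage(health: int, damage: int) -> int:
    """Hypothetical existing game function: subtract damage, never going below zero."""
    if damage < 0:
        raise ValueError("damage must be non-negative")
    return max(0, health - damage)


# Test cases covering the normal path, the boundary, and the error path.
def test_reduces_health():
    assert apply_damage(100, 30) == 70


def test_health_never_drops_below_zero():
    assert apply_damage(10, 25) == 0


def test_rejects_negative_damage():
    try:
        apply_damage(10, -5)
    except ValueError:
        return
    raise AssertionError("expected ValueError for negative damage")


for test in (
    test_reduces_health,
    test_health_never_drops_below_zero,
    test_rejects_negative_damage,
):
    test()
print("all tests passed")
```

Even when tests like these are generated automatically, a developer still has to confirm that they assert the intended behavior rather than whatever the code happens to do.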

However, AI will often produce code that contains bugs, doesn’t function as intended, or won’t run at all. A recent study found that three of the top AI tools generated correct code only part of the time: ChatGPT (65.2%), GitHub Copilot (46.3%), and Amazon CodeWhisperer (31.1%). Other studies have warned that AI-generated code can have security vulnerabilities. Due to these issues, AI code generators should be viewed as assistants for developers rather than replacements.

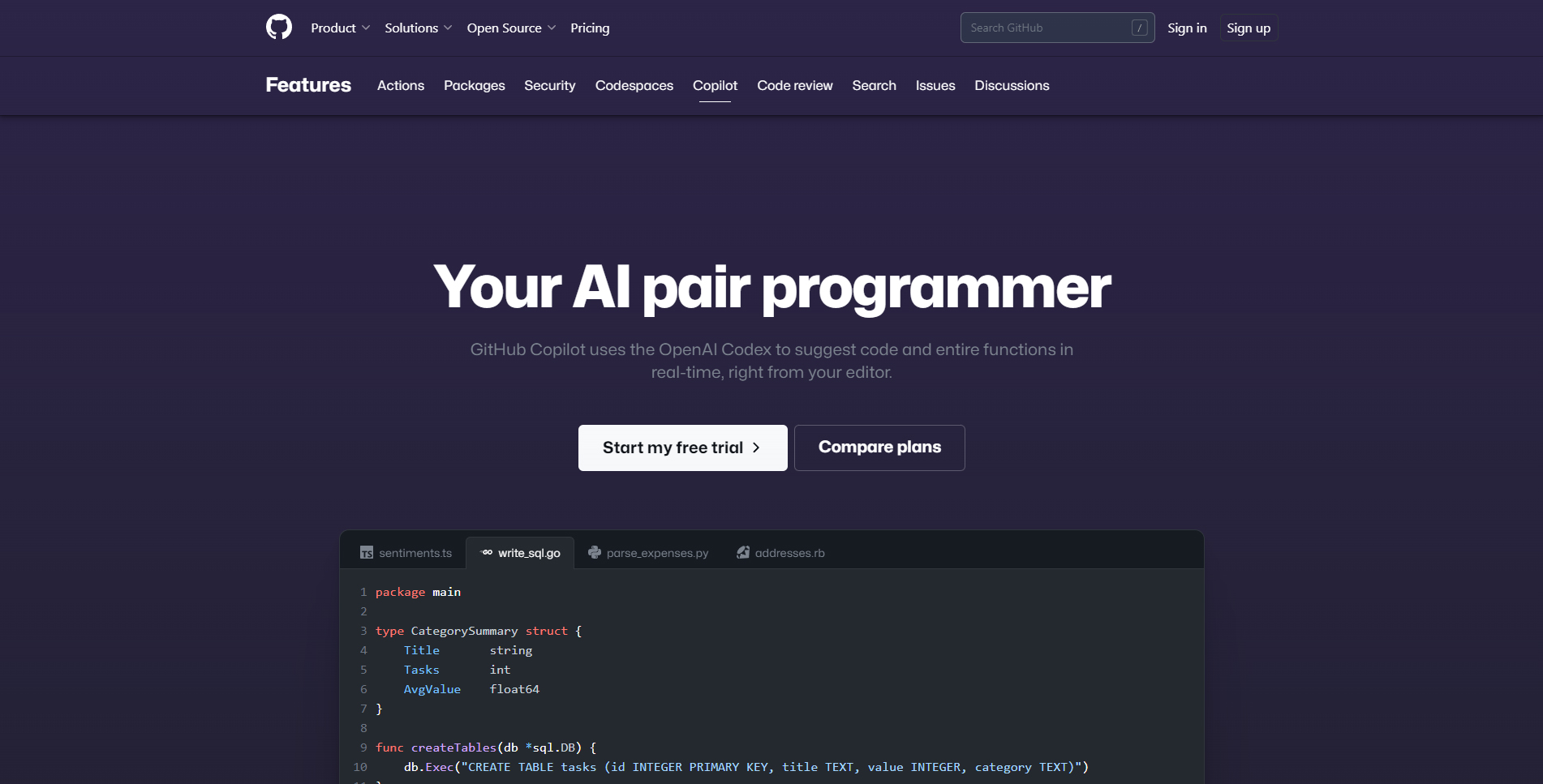

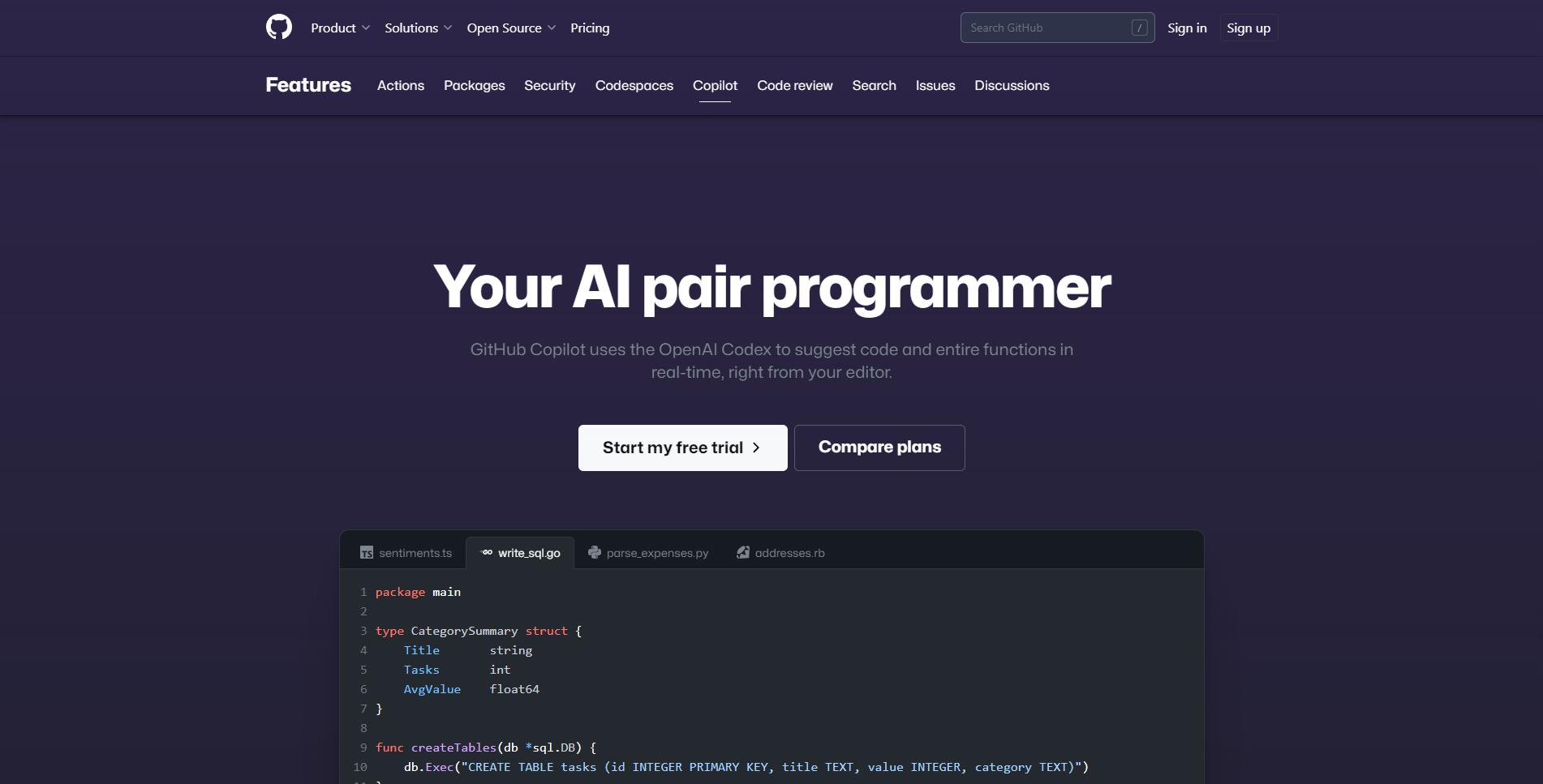

One of the best ways AI can increase productivity is through code prediction and completion. With these tools, AI suggests code that can complete tasks based on developers’ current actions and documentation (images 1 and 2). Developers can cycle through multiple suggestions and accept part or all of the suggested code.

According to GitHub, the share of developers’ code generated by the GitHub Copilot tool increased from 27% in June 2022 to 46% in February 2023, which suggests developers are pleased with the results. In one study, Copilot was able to increase developers’ coding speed by 55%.

In gaming, Roblox has its own code completion AI for UGC creators called Code Assist. The beta feature has seen strong adoption within the Roblox ecosystem. Since its beta release in March 2023, Code Assist has been used by 41% of beta users. The amount of inserted code has increased by 35%, and deleted code suggestions have decreased by 14%. Roblox also launched a Material Generation AI tool in March. It has been used by 36% of beta users and corresponded with a 50% daily increase in users creating material variations. You can read more about AI-generated art in our report.

Review, Optimization, & Debugging

AI can help developers review and manage existing code, allowing developers to focus more on creating new code. Specialized AI tools expedite code reviews by scanning repositories for potential issues and then suggesting code solutions and actionable feedback. These tools not only detect bugs and security vulnerabilities but also look for deeper issues that could affect the code’s reliability. Developers can use AI to identify performance bottlenecks, remove redundant code, improve maintainability and readability, and suggest other optimizations.
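As a rough illustration of the rule-based side of automated review, the sketch below uses Python’s standard `ast` module to flag two common problems: calls to `eval()`/`exec()` (a security risk) and bare `except:` clauses (a reliability risk). The rule set, messages, and sample code are invented for this example and are not taken from any product named in this report; commercial tools combine far larger rule sets with trained models.

```python
import ast

# Hypothetical rule set for illustration only.
RISKY_CALLS = {"eval", "exec"}


def scan_source(source: str) -> list[tuple[int, str]]:
    """Return sorted (line number, message) pairs for each finding."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        # Security rule: direct calls to eval() or exec().
        if (
            isinstance(node, ast.Call)
            and isinstance(node.func, ast.Name)
            and node.func.id in RISKY_CALLS
        ):
            findings.append(
                (node.lineno, f"avoid {node.func.id}(): it can run untrusted input")
            )
        # Reliability rule: a bare "except:" swallows every error.
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            findings.append((node.lineno, "bare except hides unexpected errors"))
    return sorted(findings)


SAMPLE = '''
def load(value):
    try:
        return eval(value)
    except:
        return None
'''

for line, message in scan_source(SAMPLE):
    print(f"line {line}: {message}")
```

A scanner like this only reports what its rules describe, which is why findings still need a developer’s judgment before any suggested fix is accepted.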

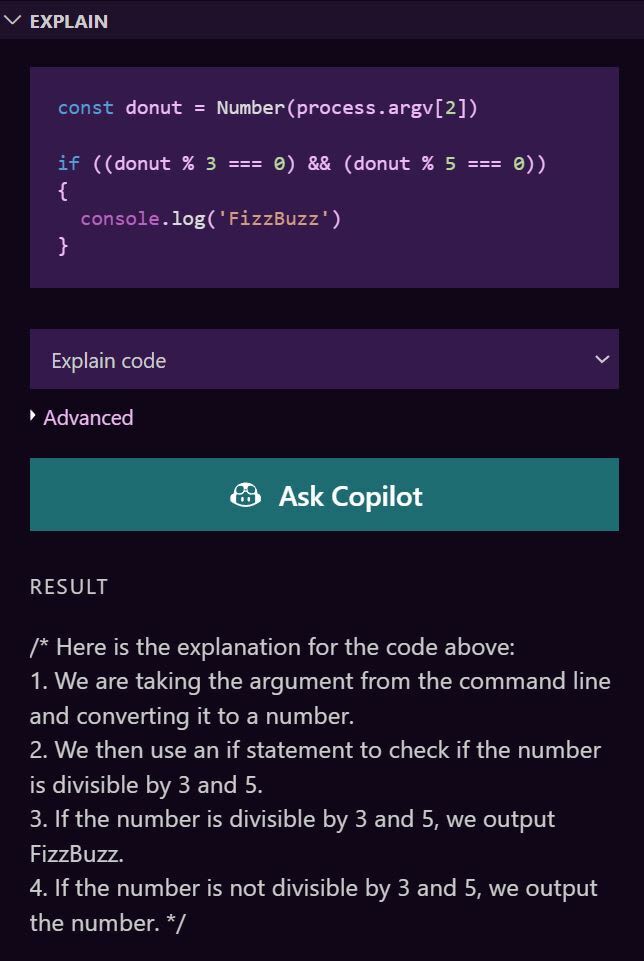

AI can also help teams of developers stay on the same page. Many AI tools can generate natural language documentation for code, reducing the time developers spend summarizing their code’s functionality. AI can even suggest ways to keep code style consistent across teams to improve collaboration. And some AI tools, such as ChatGPT and GitHub Copilot’s “Explain” feature, allow users to get straightforward explanations of code they don’t understand, which is particularly useful for developers trying to understand code written by other teammates (image).

Key Products

ChatGPT is a natural language chatbot from OpenAI that can be used to generate, explain, and review code. Version 3.5 is available for free for all users. A $20 monthly subscription to ChatGPT Plus gives users faster response time, availability even when demand is high, and priority access to new features. The latest GPT model, GPT-4, is in beta and is only available for ChatGPT Plus members (image). A waitlist for the GPT-4 API is available on the developer’s site.

GitHub Copilot is a coding assistant created in collaboration with OpenAI (image). Copilot uses the OpenAI Codex, which was built on GPT-3, to suggest code through extensions for Visual Studio Code, Visual Studio, Neovim, and JetBrains’ suite of integrated development environments (IDEs). It supports a variety of coding languages, but it is especially proficient in Python, JavaScript, TypeScript, Ruby, Go, C#, and C++. Copilot costs $19 monthly for businesses.

Note: OpenAI Codex was discontinued by OpenAI on March 23, 2023, in favor of newer, more powerful GPT models. However, GitHub announced GitHub Copilot X the day before. Copilot X is a new version that will incorporate GPT-4 as well as a chat terminal, pull request support, and other improvements. A waitlist is available on GitHub’s site. In the meantime, the prior version of Copilot remains operational.

Amazon CodeWhisperer is a coding assistant trained on open-source and Amazon code (image). Like Copilot, it can generate suggestions directly in IDEs, including Amazon Web Services (Cloud9, Lambda, and SageMaker Studio), Visual Studio Code, IntelliJ IDEA, PyCharm, and JupyterLab. CodeWhisperer supports 15 programming languages: Python, Java, JavaScript, TypeScript, C#, Go, Rust, PHP, Ruby, Kotlin, C, C++, Shell scripting, SQL, and Scala. CodeWhisperer costs $19 monthly per user for businesses and includes 500 code security scans a month per user.

Visual Studio IntelliCode is a coding assistant extension developed by Microsoft (image). IntelliCode was trained on open-source GitHub code, provides live suggestions like Copilot and CodeWhisperer, and is currently free for all users. The Visual Studio 2022 extension supports C#, C++, Java, SQL, and XAML, whereas the Visual Studio Code extension supports TypeScript, JavaScript, and Python.

Tabnine is a coding assistant that Codota acquired in 2019 and merged with their own AI coding technology (image). Tabnine is similar to other assistants, but it also learns each individual’s coding patterns and style to improve its suggestions over time. An enterprise plan even allows users to train models on their own code repositories. Tabnine supports many popular IDEs, including Visual Studio, IntelliJ, Sublime, PyCharm, Neovim, and JupyterLab. It also supports over a dozen languages, including Python, C, C++, C#, Java, JavaScript, TypeScript, and Ruby. Tabnine’s Pro tier costs $12 monthly per user and has a limit of 23 users. Enterprise pricing is available upon request.

Codiga is a customizable code analysis tool that provides automated code quality reviews (image). Codiga scans use over 2,000 preset rules that cover security, performance, and best practices, while also allowing users to create their own rules. The tool integrates into IDEs, such as Visual Studio and JetBrains, as well as source code repos, including GitHub, GitLab, and Bitbucket. Codiga supports rule sets for over a dozen languages, including Python, C, C++, JavaScript, and TypeScript. Codiga costs $14 monthly per user.

DeepCode AI is a collection of security models that power the Snyk platform (image). Unlike chatbots and code assistants, which were developed primarily for generation, DeepCode was specifically designed to find and fix vulnerabilities. Snyk provides extensions for Visual Studio Code, Eclipse, and all major JetBrains IDEs, including IntelliJ IDEA, WebStorm, PyCharm, GoLand, PhpStorm, Android Studio, AppCode, Rider, and RubyMine. Within Snyk, DeepCode supports 11 programming languages, including Python, C, C++, Java, JavaScript, and TypeScript. Snyk’s free tier provides 100 code tests per month, and the Team tier costs $52 monthly per user (sold in bundles of 5 users) and provides unlimited tests and Jira integration.

AlphaCode is a coding assistant tool developed by Google DeepMind (image). The model made waves in 2022 for beating human programmers in multiple coding competitions. AlphaCode remains a research project and hasn’t been officially launched, but the team has posted some of its research on its blog.

IBM Watson Code Assistant is part of IBM’s suite of AI products (image). The tool is scheduled to be released later in 2023, with the first use case focused on AI-generated code suggestions for Ansible Playbooks. More information and a waitlist are available on IBM’s site.
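For budgeting, the per-user prices quoted above can be compared with a short calculation. The sketch below is a back-of-the-envelope estimate only: the 12-person team is a hypothetical example, the figures are the list prices stated in this section, and the only pricing rule modeled is Snyk’s bundles of 5 users. Copilot is left out because the price above isn’t stated on a per-user basis.

```python
import math


def monthly_cost(users: int, price_per_user: int, bundle_size: int = 1) -> int:
    """Monthly cost in dollars when seats are sold in bundles of `bundle_size`."""
    seats = math.ceil(users / bundle_size) * bundle_size
    return seats * price_per_user


# (price per user per month, bundle size) as quoted in this section.
PLANS = {
    "Amazon CodeWhisperer": (19, 1),
    "Tabnine Pro": (12, 1),
    "Codiga": (14, 1),
    "Snyk Team": (52, 5),
}

TEAM_SIZE = 12  # hypothetical studio team

for name, (price, bundle) in PLANS.items():
    print(f"{name}: ${monthly_cost(TEAM_SIZE, price, bundle)} per month")
```

Because Snyk seats come in fives, a 12-person team pays for 15 seats, so bundle rules can matter as much as the headline price.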

IP Considerations

Because no legal precedent has been established regarding AI-generated code, the most cautious studios should consider training their own AI models or restricting AI to non-generative uses for the time being. There are new licenses that aim to clarify how code can be used by AI, but it’ll likely take time for developers to update their code with these licenses and for models to be retrained.

On March 16th, 2023, the US Copyright Office released guidance stating that AI-generated works cannot be copyrighted because they are not human-authored works. To qualify for copyright, AI code must be materially modified by human developers.

There are also several lawsuits against generative AI companies claiming that AI models were unlawfully trained on copyrighted works. While many AI models were trained on open-source code, some open-source licenses have limitations on use or require attribution. And since GitHub and Amazon even admit that their models may suggest code that’s identical to snippets from their training set, developers using AI-generated code could taint their own code with license violations. Copilot and CodeWhisperer offer flagging tools that can identify identical code, but it’s unclear if these tools are thorough enough to catch every instance.
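To show what flagging identical code could involve, the sketch below checks whether a suggestion repeats several consecutive lines from a known, licensed snippet after whitespace is normalized. This is a simplified illustration, not how Copilot or CodeWhisperer implement their flagging tools; the function names, the sample snippets, and the three-line threshold are all assumptions made for the example.

```python
def normalize(code: str) -> list[str]:
    """Collapse whitespace on each line and drop blank lines."""
    lines = (" ".join(line.split()) for line in code.splitlines())
    return [line for line in lines if line]


def windows(lines: list[str], size: int) -> set[tuple[str, ...]]:
    """Every run of `size` consecutive lines, as hashable tuples."""
    return {tuple(lines[i:i + size]) for i in range(len(lines) - size + 1)}


def shares_verbatim_run(suggestion: str, known_code: str, size: int = 3) -> bool:
    """True if the suggestion repeats `size` consecutive lines of known_code."""
    return bool(
        windows(normalize(suggestion), size) & windows(normalize(known_code), size)
    )


# Hypothetical snippet standing in for code under a restrictive license.
LICENSED = """
def clamp(value, low, high):
    if value < low:
        return low
    if value > high:
        return high
    return value
"""

# Same code with different indentation and spacing.
COPIED = """
def clamp(value,   low, high):
        if value < low:
            return low
        if value > high:
            return high
        return value
"""

# An independent implementation of the same idea.
INDEPENDENT = """
def clamp(v, lo, hi):
    return max(lo, min(v, hi))
"""

print(shares_verbatim_run(COPIED, LICENSED))       # True
print(shares_verbatim_run(INDEPENDENT, LICENSED))  # False
```

A check like this misses code that has been lightly renamed or reordered, which is one reason exact-match flagging alone may not catch every instance.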